Cybersecurity for Higher Ed: 7 Top Vulnerabilities and How AI Can Address Them

Learn 7 critical vulnerabilities in cybersecurity for higher education and how AI addresses faculty account compromise and phishing.

March 15, 2026

Higher education institutions manage a unique security equation: protect sensitive research, student records, and financial data while keeping networks open for collaboration across students, faculty, staff, and external partners. That openness creates inconsistent user behavior, decentralized IT ownership, and trusted relationships that attackers can exploit.

Email remains a primary entry point for cyberattacks in higher ed, and the human element contributes to 60% of breaches. AI-powered behavioral analysis helps close key gaps by learning what normal looks like for each role and flagging identity and messaging anomalies that static rules miss.

1. Compromised Faculty Accounts Create Outsized Risk

Faculty email accounts are prime targets because threat actors weaponize institutional trust rather than exploit a single technical flaw. When attackers obtain faculty credentials through phishing, adversary-in-the-middle (AiTM) techniques, or consent-abuse tactics, they gain access to a trusted identity that can reach payroll systems, research contacts, departmental administrators, and external collaborators. Understanding how account takeover attacks work is critical for any university security team.

That risk compounds in higher ed because faculty sit at the intersection of sensitive data and open collaboration. They approve requests, introduce vendors, share documents with partners, and communicate across multiple departments. A single compromised mailbox can become an internal launch point for impersonation, lateral phishing, and quiet data harvesting that blends into normal academic traffic.

Why Behavioral Baselines Matter

Compromised accounts often behave "correctly" from a pure access-control perspective. Once an attacker logs in with valid credentials, many traditional controls treat the authenticated user as legitimate and move on, even if the intent behind that access is malicious.

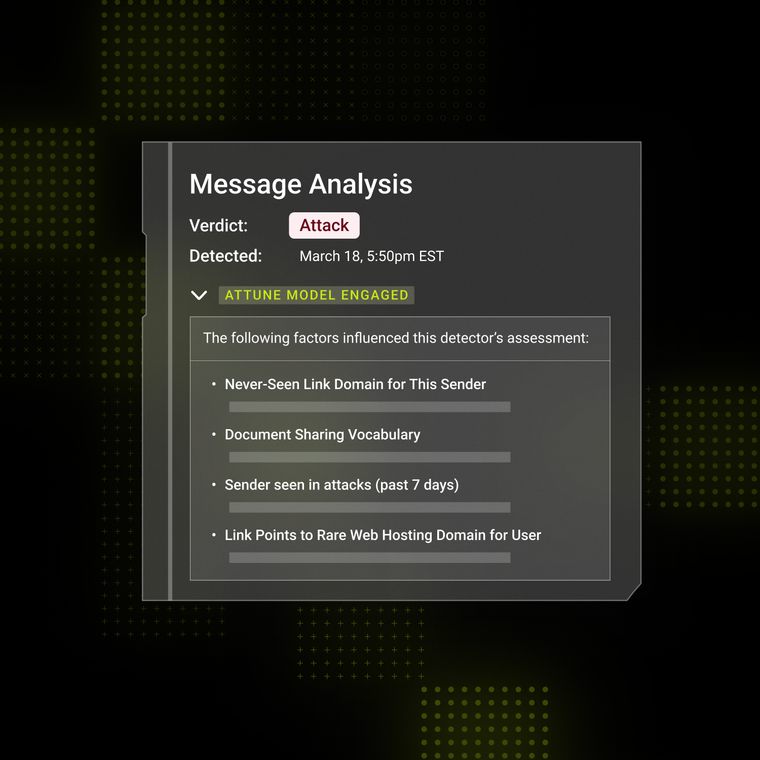

Behavioral analysis addresses this gap by learning how an individual faculty member typically communicates and then highlighting deviations worth investigating. Security teams can focus review on accounts that suddenly send unusually urgent messages, introduce new external recipients not seen in that person's history, or create forwarding rules inconsistent with prior behavior.

This approach is especially valuable on campuses because "normal" varies across departments, research domains, and the time of year. Baselining reduces over-reliance on generic thresholds and helps teams focus on activity that is truly anomalous for a specific identity.
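To make the idea concrete, here is a minimal Python sketch of a per-mailbox baseline. It is an illustration under stated assumptions, not a description of any product's implementation: the `MailboxBaseline` class, its method names, and the `example.edu` addresses are all hypothetical, and the domain check is deliberately simplistic (it treats subdomains as external).

```python
from dataclasses import dataclass, field


@dataclass
class MailboxBaseline:
    """Hypothetical per-user baseline built from historical mail metadata."""
    internal_domain: str
    known_recipients: set[str] = field(default_factory=set)
    known_forward_targets: set[str] = field(default_factory=set)

    def learn(self, recipients: list[str]) -> None:
        # Record every recipient seen during the baseline window.
        self.known_recipients.update(r.lower() for r in recipients)

    def is_external(self, address: str) -> bool:
        # Simplification: anything outside the exact internal domain is external.
        return not address.lower().endswith("@" + self.internal_domain)

    def review_message(self, recipients: list[str]) -> list[str]:
        """Return external recipients never seen in this user's history."""
        return [
            r for r in recipients
            if self.is_external(r) and r.lower() not in self.known_recipients
        ]

    def review_forwarding_rule(self, target: str) -> bool:
        """True when a rule forwards mail to an unfamiliar external address."""
        t = target.lower()
        return self.is_external(t) and t not in self.known_forward_targets


baseline = MailboxBaseline(internal_domain="example.edu")
baseline.learn(["chair@example.edu", "editor@journal.example.org"])

new_externals = baseline.review_message(
    ["editor@journal.example.org", "billing@unfamiliar.example.net"]
)
suspicious_rule = baseline.review_forwarding_rule("archive@unfamiliar.example.net")
```

In this sketch, the familiar journal editor passes quietly while the unfamiliar billing address and the new external forwarding target are surfaced for review. A real baseline would also account for time of year and department, as noted above.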

2. Academic Language Provides Perfect Phishing Cover

Campus communication norms provide attackers with effective camouflage. Universities use a formal tone, predictable workflows (approvals, reimbursements, committee decisions), and high-trust relationships, making social engineering feel routine rather than suspicious. When an attacker writes in the voice of a dean, department chair, or IT administrator, recipients often prioritize responsiveness over skepticism. These credential harvesting tactics succeed because they mirror legitimate campus workflows.

Attackers also benefit from the diversity of legitimate external communication in higher ed. Faculty and staff routinely interact with publishers, conference organizers, visiting scholars, donors, and vendors, making it harder to treat unknown senders as automatically suspicious. EDUCAUSE highlights how attackers leverage that trust in higher ed environments, especially when compromised .edu senders get treated as "safe" by busy recipients.

Detecting Threats Beyond Content Scanning

Content scanning alone often misses campus phishing because the message body can look legitimate, grammatically correct, and aligned with internal workflows. Modern attacks focus on context: who the sender typically talks to, how they phrase requests, and whether the requested action fits the relationship.

Context analysis evaluates signals around the message, not just the words inside it. It can surface risk when a sender suddenly reaches out to a new set of recipients, shifts from informational emails to transactional requests, or sends at times that don't match prior patterns. It can also flag inconsistencies in relationship history, such as a "department chair" who has never emailed a specific finance processor but now requests urgent payment changes.
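The "department chair" scenario can be sketched in a few lines of Python. This is only an illustration: the keyword list, the `context_flags` function, and the flag names are assumptions made for the example, and a production system would rely on far richer intent and identity signals than substring matching.

```python
from collections import Counter

# Hypothetical keyword list standing in for real intent analysis.
TRANSACTIONAL_TERMS = ("wire", "payment", "bank account", "direct deposit", "invoice")


def is_transactional(subject: str, body: str) -> bool:
    """Rough check for requests that involve money movement."""
    text = f"{subject} {body}".lower()
    return any(term in text for term in TRANSACTIONAL_TERMS)


def context_flags(history: Counter, sender: str, recipient: str,
                  subject: str, body: str) -> list[str]:
    """Combine relationship history with request type to produce review flags."""
    flags = []
    first_contact = history[(sender, recipient)] == 0
    transactional = is_transactional(subject, body)
    if first_contact:
        flags.append("first_contact")
    if transactional:
        flags.append("transactional_request")
    if first_contact and transactional:
        # Neither signal is alarming alone; together they merit a closer look.
        flags.append("escalate")
    return flags


# Prior message counts per (sender, recipient) pair; values are made up.
history = Counter({("chair@example.edu", "admin@example.edu"): 42})

flags = context_flags(
    history,
    sender="chair@example.edu",
    recipient="finance@example.edu",
    subject="Urgent: update payment details",
    body="Please change the bank account on file today.",
)
```

The design choice worth noting is that the words alone do not trigger escalation. The same payment request between two people with a long history of correspondence would produce only the `transactional_request` flag.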

This matters in higher ed because universities cannot simply block all unfamiliar senders or aggressively quarantine atypical language without disrupting legitimate teaching and research.

3. Educational Platforms Become Attack Infrastructure

Educational platforms, including learning management systems, HR tools, and departmental forms, can become attacker infrastructure when threat actors abuse trusted domains and legitimate workflows. Attackers don't always need to "hack" the platform directly. If they compromise a user account, they can create believable content within trusted tools, then use email to lure victims into those environments, inheriting institutional credibility in the process.

Platform Exploitation and Payroll Fraud

Platform exploitation becomes especially damaging when attackers pair it with operational fraud. A common pattern starts in email: an attacker impersonates an employee or administrator, then uses trusted links or platform notifications to move the victim into a workflow that feels routine.

From there, attackers may attempt to redirect payroll, submit fraudulent reimbursement requests, or capture credentials via embedded forms. This pattern closely mirrors vendor email compromise tactics seen across industries. REN-ISAC has warned about LMS-driven email abuse in education environments, where high-volume messaging from a trusted platform can amplify a compromise quickly.

While payroll changes occur within HR or finance systems, the setup and authorization often begin with email-based social engineering, making the inbox a critical control point for investigating the initiating message, the sender identity, and the request context.

4. Diverse Campus Populations Create Security Blind Spots

Universities inherit security blind spots because campus populations behave differently by design. Undergraduates, graduate researchers, faculty, staff, clinicians, contractors, and visiting scholars all use the same identity infrastructure, but their "normal" looks nothing alike.

When a security program assumes uniform behavior, it creates two problems at once: it misses targeted attacks aimed at a specific segment, and it generates noise when legitimate activity looks anomalous compared to a generic baseline. Here's why one-size-fits-all baselines break down across campus roles:

Undergraduate students: High-volume, short-lived interactions across courses and clubs, frequent device changes, and common password resets.

Graduate researchers: Regular access to specialized systems, external collaborators, and long-running projects with changing membership.

Faculty: Broad communication networks, authority signals that attackers exploit, and frequent approval workflows.

Administrative staff: Predictable finance and HR processes that attackers target with impersonation and payment-change requests.

External affiliates: Alumni, contractors, and visiting scholars who may retain access or use unmanaged devices.

EDUCAUSE continues to highlight gaps in campus security awareness, including student-focused training, which can increase exposure in the largest user segment.

Adapting Detection to Individual Behavior

Adaptive detection works better in higher ed because it starts from the reality that identities behave differently across roles and time. Instead of building a single profile for "a university user," effective programs learn per-user and per-group patterns, then investigate meaningful deviations.

Adaptive detection also supports security operations by reducing alert fatigue. When the system understands that registration week and finals week change traffic patterns, teams can focus on relationship anomalies rather than being overwhelmed by volume spikes. Paired with role-based controls like conditional access and step-up verification for high-risk actions, individualized baselines help higher ed cybersecurity programs improve signal quality without imposing blanket restrictions.
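A small Python sketch shows why a per-group baseline changes the answer. The role names, the sample counts in `ROLE_HISTORY`, and the z-score threshold of 3 are all assumptions chosen for illustration. A simple z-score stands in here for whatever statistical or learned model a real program would use.

```python
from statistics import mean, stdev

# Hypothetical daily outbound message counts observed for each role group.
ROLE_HISTORY = {
    "undergraduate": [3, 5, 4, 6, 2, 5, 4],
    "administrative_staff": [40, 38, 45, 42, 39, 41, 44],
}


def z_score(value: float, samples: list[float]) -> float:
    """Standard deviations between a value and the mean of the samples."""
    sigma = stdev(samples)
    if sigma == 0:
        return 0.0  # No variation in history, so no meaningful score.
    return (value - mean(samples)) / sigma


def is_anomalous(role: str, observed: float, threshold: float = 3.0) -> bool:
    """Compare an observation with the baseline for that user's role group."""
    return z_score(observed, ROLE_HISTORY[role]) > threshold


# The same 45 outbound messages are judged against each group's own baseline.
student_flagged = is_anomalous("undergraduate", 45)
staff_flagged = is_anomalous("administrative_staff", 45)
```

Forty-five outbound messages in a day is routine for the administrative sample but far outside the undergraduate one. A single campus-wide threshold would either miss the student account or bury the staff account in alerts.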

5. Academic Openness Conflicts with Security Lockdowns

Research collaboration requires information sharing, network accessibility, and international partnerships that conflict with traditional security lockdown measures. Universities support complex interdependencies across multi-institutional networks while running experimental software in research environments. Heavy-handed security controls disrupt legitimate academic work and create pressure to bypass measures or maintain overly permissive access policies.

Context-Aware Protection Preserves Academic Freedom

Context-aware protection focuses controls on risky behavior rather than restricting broad categories of activity. Instead of blocking collaboration, it helps security teams identify when collaboration patterns shift in ways that suggest compromise.

For example, context-aware approaches can flag when an identity begins making unusually urgent requests, introduces unfamiliar external recipients, or changes how it routes sensitive messages. In research environments, they can distinguish legitimate cross-institutional communication from suspicious "new partner" outreach that appears suddenly and requests access.

Email remains a common source of friction in these environments because it sits at the center of approvals, access requests, and third-party introductions. When defenders improve the ability to recognize anomalous email behavior, they often reduce the need for disruptive lockdown responses elsewhere.

6. Traditional Detection Struggles Against AI-Powered Attacks

Traditional detection models often struggle in higher ed because modern attacks adapt quickly and university environments vary widely across departments. Static rules and known-bad signatures still add value, but they may not reliably detect novel social engineering, subtle account-takeover behavior, or context-driven impersonation. As AI-generated phishing becomes more common, the gap between legacy detection and the sophistication of modern attacks continues to widen.

Organizations that deploy AI and automation extensively have reduced breach costs by nearly $1.9 million compared to those that don't, which helps explain why security teams are investing in more adaptive detection.

Real-Time Anomaly Detection Closes the Gap

Real-time anomaly detection focuses on deviations from expected behavior rather than matching a known signature. That matters when an attacker uses a valid account, sends plausible text, and stays within normal infrastructure.

Anomaly-based approaches can surface issues like unusual forwarding behavior, unexpected spikes in outbound messages, sudden changes in who an identity communicates with, or suspicious "first-time" requests involving sensitive workflows. In higher ed, real-time detection also benefits from context about academic rhythms.

Registration, admissions, financial aid, and semester transitions all change email volume and workflow urgency. Detection that understands those rhythms can reduce false alarms while still highlighting relationship anomalies that don't fit the season.
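One way to picture season-aware detection is a volume threshold that relaxes during known busy windows while a relationship check stays constant. The following Python sketch assumes hypothetical calendar dates, multipliers, and a `new_recipient_share` input (the fraction of a day's recipients the sender has never contacted before). None of these values come from a real deployment.

```python
from datetime import date
from typing import Optional

# Hypothetical busy windows; each campus would load its own academic calendar.
BUSY_WINDOWS = [
    (date(2026, 8, 17), date(2026, 8, 28), "fall registration"),
    (date(2026, 12, 7), date(2026, 12, 18), "finals"),
]


def busy_period(day: date) -> Optional[str]:
    """Return the name of the busy window containing the day, if any."""
    for start, end, name in BUSY_WINDOWS:
        if start <= day <= end:
            return name
    return None


def evaluate_day(day: date, outbound_count: int, typical_daily_count: int,
                 new_recipient_share: float, normal_multiplier: float = 3.0,
                 busy_multiplier: float = 6.0, max_new_share: float = 0.5) -> list[str]:
    """Season-adjusted volume check plus a season-independent relationship check."""
    alerts = []
    multiplier = busy_multiplier if busy_period(day) else normal_multiplier
    if outbound_count > typical_daily_count * multiplier:
        alerts.append("volume_spike")
    if new_recipient_share > max_new_share:
        alerts.append("relationship_shift")
    return alerts


registration_day = evaluate_day(date(2026, 8, 20), 200, 50, 0.1)
ordinary_day = evaluate_day(date(2026, 10, 6), 200, 50, 0.1)
busy_but_odd = evaluate_day(date(2026, 8, 20), 200, 50, 0.8)
```

The same 200-message day raises a volume alert in October but not during registration. A sudden shift toward unfamiliar recipients is flagged in either case.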

7. Security Tools Miss University-Specific Rhythms

University-specific rhythms create predictable windows attackers can exploit, and generic tools rarely model those patterns well. High-volume periods like onboarding, course registration, and financial aid processing change who emails whom, how quickly approvals are processed, and what "urgent" means.

Attackers take advantage by timing impersonation and credential phishing campaigns to moments when staff expect unusual requests and have less time to verify them. These campaigns often fall under business email compromise, where the attacker's goal is to manipulate a trusted workflow rather than deliver malware. The FBI reported BEC losses totaling $2.77 billion in 2024, underscoring why operationally realistic impersonation remains so persistent.

Training Models on Academic Patterns

Detection models trained on academic patterns improve accuracy by separating legitimate seasonal spikes from coordinated abuse. Academic-aware detection looks at who initiates requests, how those requests compare to prior behavior, and whether the sender-recipient relationship makes sense.

Universities can reinforce these benefits by pairing detection improvements with process controls. Simple measures like out-of-band verification for payment changes and standardized reporting paths for suspicious requests help ensure that detection signals turn into timely action rather than lingering alerts.
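As a rough illustration of how out-of-band verification and detection signals can combine, the Python sketch below gates a payment change on a callback to the number already on file. The `PaymentChangeRequest` fields, the `decide` function, and the three outcomes are hypothetical. Actual finance workflows will differ by institution.

```python
from dataclasses import dataclass


@dataclass
class PaymentChangeRequest:
    """Hypothetical record of a request to change payment or payroll details."""
    requester: str
    number_on_file: str        # Contact number held before the request arrived.
    callback_number_used: str  # Number staff actually called to verify.
    verified_by_phone: bool
    detection_flags: tuple[str, ...] = ()


def decide(request: PaymentChangeRequest) -> str:
    """Return 'hold', 'escalate', or 'approve' for a payment change."""
    if not request.verified_by_phone:
        return "hold"  # No out-of-band confirmation yet.
    if request.callback_number_used != request.number_on_file:
        # Calling a number supplied in the email itself proves nothing.
        return "hold"
    if request.detection_flags:
        return "escalate"  # Verified, but detection raised concerns.
    return "approve"


unverified = decide(PaymentChangeRequest(
    "vendor@supplier.example.com", "555-0100", "555-0199", True))
flagged = decide(PaymentChangeRequest(
    "vendor@supplier.example.com", "555-0100", "555-0100", True,
    ("first_contact",)))
clean = decide(PaymentChangeRequest(
    "vendor@supplier.example.com", "555-0100", "555-0100", True))
```

The point of the sketch is the ordering: process controls hold the change first, and detection flags decide whether a verified request still goes to a human reviewer.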

Building Security Awareness That Fits Campus Culture

Security awareness works best in higher ed when it reflects campus roles, norms, and constraints, rather than treating every user the same. Faculty respond to different incentives than students, and administrative staff face different pressures than research teams. Role-based programs, supported by a defined awareness program structure, can reduce risky behavior without overloading users with generic compliance messaging.

Students represent a large, constantly renewing population and often rely on unmanaged personal devices. Effective student programs embed security into existing touchpoints—orientation, residence life programming, and LMS announcements—rather than relying on optional trainings.

Phishing simulations also help, especially when they follow progressive difficulty. SANS provides practical phishing guidance on building simulations that teach recognition without creating a punitive dynamic.

Measuring beyond click rates helps security leaders understand whether awareness programs change behavior over time. More useful measures include reporting rates, time-to-report, and the types of messages users flag—metrics that show whether the campus community acts as a detection layer, not just whether individuals avoid mistakes. For a deeper look at what employees should recognize, see this overview of email cybersecurity threats.
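For teams that want to track these measures, the calculation is straightforward. The Python sketch below assumes a hypothetical `SimulationResult` record per delivered simulation email. The field names and sample timestamps are invented for the example.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class SimulationResult:
    """Hypothetical outcome for one user in one phishing simulation."""
    delivered_at: datetime
    clicked: bool
    reported_at: Optional[datetime] = None


def summarize(results: list[SimulationResult]) -> dict:
    """Compute click rate, report rate, and median minutes to report."""
    if not results:
        raise ValueError("at least one result is required")
    total = len(results)
    reported = [r for r in results if r.reported_at is not None]
    minutes = [
        (r.reported_at - r.delivered_at).total_seconds() / 60 for r in reported
    ]
    return {
        "click_rate": sum(r.clicked for r in results) / total,
        "report_rate": len(reported) / total,
        "median_minutes_to_report": median(minutes) if minutes else None,
    }


sent = datetime(2026, 3, 2, 9, 0)
summary = summarize([
    SimulationResult(sent, clicked=False, reported_at=datetime(2026, 3, 2, 9, 10)),
    SimulationResult(sent, clicked=True, reported_at=datetime(2026, 3, 2, 9, 30)),
    SimulationResult(sent, clicked=True),
    SimulationResult(sent, clicked=False),
])
```

Note that the second user both clicked and reported. Counting that report matters, because a fast report after a mistake still helps the security team contain the message.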

How Abnormal Addresses Higher Education Security Challenges

Abnormal helps strengthen cybersecurity for higher ed by adding an identity- and behavior-focused layer on top of existing email security controls. Abnormal's Behavioral AI learns normal communication patterns across the institution, validates sender-identity signals, and analyzes message intent to surface advanced social-engineering and account-takeover activity.

The platform helps identify threats that commonly impact universities, including credential phishing, impersonation that exploits institutional trust, and compromised accounts used to target students, staff, and external partners.

Abnormal's approach to AI email security is designed for environments where attack surfaces are broad and user behavior is highly variable. In higher ed, where email spans departments and affiliates, reducing noisy alerts while preserving visibility is a practical requirement.

Abnormal integrates with existing campus IT workflows and supports audit-ready reporting for compliance needs such as FERPA. Recognized as a Leader in the 2025 Gartner® Magic Quadrant™ for Email Security, Abnormal enhances existing security frameworks rather than requiring disruptive infrastructure changes.

Want to learn how Abnormal can help protect your institution against sophisticated university-targeted attacks? Request a demo to discover how Behavioral AI addresses the unique cybersecurity challenges facing higher education.
